One last Gold sponsor slot is available for the AI Engineer Summit in NYC. Our last round of invites is going out soon - apply here - If you are building AI agents or AI eng teams, this will be the single highest-signal conference of the year for you!

While the world melts down over DeepSeek, few are talking about the OTHER notable group of former hedge fund traders who pivoted into AI and built a remarkably profitable consumer AI business with a tiny but incredibly cracked engineering team: Chai Research. In short order they have:

Started a Chat AI company well before Noam Shazeer started Character AI, and outlasted his departure.

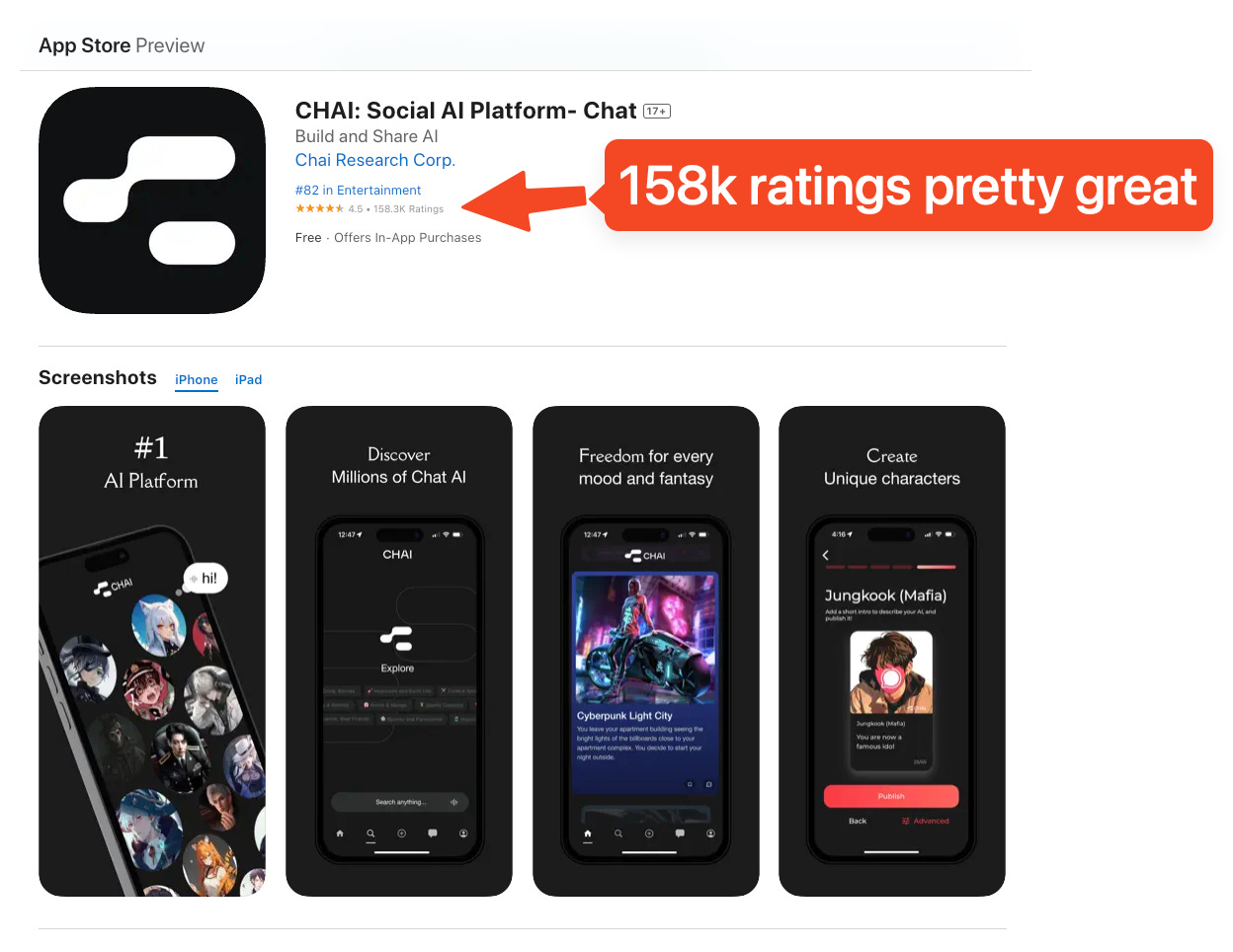

Crossed 1m DAU in 2.5 years - William updates us on the pod that they’ve hit 1.4m DAU now, another +40% from a few months ago. Revenue crossed $22m.

Launched the Chaiverse model crowdsourcing platform - taking 3-4 week A/B testing cycles down to 3-4 hours, and deploying >100 models a week.

While they’re not paying million dollar salaries, you can tell they’re doing pretty well for an 11 person startup:

The Chai Recipe: Building infra for rapid evals

Remember how the central thesis of LMarena (formerly LMsys) is that the only comprehensive way to evaluate LLMs is to let users try them out and pick winners?

At the core of Chai is a mobile app that looks like Character AI, but is actually the largest LLM A/B testing arena in the world, specialized in retaining chat users for Chai’s use cases (therapy, assistant, roleplay, etc). It’s basically what LMArena would be if taken very, very seriously at one company (with $1m in prizes to boot):

Chai publishes occasional research on how they think about this, including talks at their Palo Alto office:

William expands upon this in today’s podcast (34 mins in):

Fundamentally, the way I would describe it is when you're building anything in life, you need to be able to evaluate it. And through evaluation, you can iterate, we can look at benchmarks, and we can say the issues with benchmarks and why they may not generalize as well as one would hope in the challenges of working with them. But something that works incredibly well is getting feedback from humans. And so we built this thing where anyone can submit a model to our developer backend, and it gets put in front of 5000 users, and the users can rate it.

And we can then have a really accurate ranking of which models users are finding more engaging or more entertaining. And it gets, you know, it's at this point now, where every day we're able to, I mean, we evaluate between 20 and 50 models, LLMs, every single day, right. So even though we've only got a team of, say, five AI researchers, they're able to iterate a huge quantity of LLMs, right. So our team ships, let's just say minimum 100 LLMs a week is what we're able to iterate through. Now, before that moment in time, we might iterate through three a week, we might, you know, there was a time when even doing like five a month was a challenge, right? By being able to change the feedback loops to the point where it's not, let's launch these three models, let's do an A/B test, let's assign, let's do different cohorts, let's wait 30 days to see what the day 30 retention is, which is the kind of the, if you're doing an app, that's like A/B testing 101 would be, do a 30-day retention test, assign different treatments to different cohorts and come back in 30 days. So that's insanely slow. That's just, it's too slow. And so we were able to get that 30-day feedback loop all the way down to something like three hours.
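To make the loop William describes concrete, here is a minimal sketch of turning user ratings into a leaderboard. It is not Chai's actual pipeline: the `rank_models` helper, the 1-5 rating scale, and ranking by plain mean rating are all assumptions for illustration, since the quote only says that users rate submitted models and that a ranking comes out.

```python
from collections import defaultdict

def rank_models(ratings):
    """Rank models by mean user rating (hypothetical scoring rule).

    ratings: iterable of (model_id, score) pairs, e.g. 1-5 stars.
    Returns a list of (model_id, mean_score), best first.
    """
    totals = defaultdict(lambda: [0.0, 0])  # model_id -> [sum, count]
    for model_id, score in ratings:
        totals[model_id][0] += score
        totals[model_id][1] += 1
    means = {m: s / n for m, (s, n) in totals.items()}
    return sorted(means.items(), key=lambda kv: kv[1], reverse=True)

# Toy feedback; in the quote each model is shown to thousands of users.
ratings = [
    ("model_a", 4), ("model_b", 2), ("model_a", 5),
    ("model_c", 3), ("model_b", 3),
]
leaderboard = rank_models(ratings)
for model_id, mean_score in leaderboard:
    print(model_id, mean_score)
```

A production system would presumably need confidence intervals or pairwise comparisons before trusting small differences, but the shape of the loop (submit, collect ratings, rank) is the same.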

In Crowdsourcing the leap to Ten Trillion-Parameter AGI, William describes Chai’s routing as a recommender system, which makes a lot more sense to us than previous pitches for model routing startups:

William is notably counter-consensus in a lot of his AI product principles:

No streaming: Chats appear all at once to allow rejection sampling

No voice: Chai actually beat Character AI to introducing voice - but removed it after finding that it was far from a killer feature.

Blending: “Something that we love to do at Chai is blending, which is, you know, it's the simplest way to think about it is you're going to end up, and you're going to pretty quickly see you've got one model that's really smart, one model that's really funny. How do you get the user an experience that is both smart and funny? Well, just 50% of the requests, you can serve them the smart model, 50% of the requests, you serve them the funny model.” (that’s it!)

But chief above all is the recommender system.
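The blending idea quoted above is simple enough to sketch in a few lines. This is an illustration only, not Chai's serving code: `smart_model`, `funny_model`, and `blended_reply` are hypothetical stand-ins, and the only thing taken from the quote is the 50/50 per-request split.

```python
import random
from collections import Counter

def smart_model(prompt: str) -> str:
    # Stand-in for a call to the "smart" LLM.
    return f"[smart] reply to: {prompt}"

def funny_model(prompt: str) -> str:
    # Stand-in for a call to the "funny" LLM.
    return f"[funny] reply to: {prompt}"

def blended_reply(prompt, models, weights, rng):
    """Pick one model per request according to the blend weights."""
    model = rng.choices(models, weights=weights, k=1)[0]
    return model(prompt)

rng = random.Random(0)  # seeded so the demo is repeatable
models = [smart_model, funny_model]
replies = [blended_reply("hi", models, [0.5, 0.5], rng) for _ in range(1000)]
counts = Counter(reply.split("]")[0] + "]" for reply in replies)
print(counts)
```

Because the choice is made per request rather than per user, a single conversation interleaves both models, which is what lets one chat feel both smart and funny.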

We also referenced Exa CEO Will Bryk’s concept of SuperKnowledge:

Full Video version

On YouTube. Please like and subscribe!

Timestamps

00:00:04 Introductions and background of William Beauchamp

00:01:19 Origin story of Chai AI

00:04:40 Transition from finance to AI

00:11:36 Initial product development and idea maze for Chai

00:16:29 User psychology and engagement with AI companions

00:20:00 Origin of the Chai name

00:22:01 Comparison with Character AI and funding challenges

00:25:59 Chai's growth and user numbers

00:34:53 Key inflection points in Chai's growth

00:42:10 Multi-modality in AI companions and focus on user-generated content

00:46:49 Chaiverse developer platform and model evaluation

00:51:58 Views on AGI and the nature of AI intelligence

00:57:14 Evaluation methods and human feedback in AI development

01:02:01 Content creation and user experience in Chai

01:04:49 Chai Grant program and company culture

01:07:20 Inference optimization and compute costs

01:09:37 Rejection sampling and reward models in AI generation

01:11:48 Closing thoughts and recruitment

Transcript

Alessio [00:00:04]: Hey everyone, welcome to the Latent Space podcast. This is Alessio, partner and CTO at Decibel, and today we're in the Chai AI office with my usual co-host, Swyx.

swyx [00:00:14]: Hey, thanks for having us. It's rare that we get to get out of the office, so thanks for inviting us to your home. We're in the office of Chai with William Beauchamp. Yeah, that's right. You're founder of Chai AI, but previously, I think you're concurrently also running your fund?

William [00:00:29]: Yep, so I was simultaneously running an algorithmic trading company, but I fortunately was able to kind of exit from that, I think just in Q3 last year. Yeah, congrats. Yeah, thanks.

swyx [00:00:43]: So Chai has always been on my radar because, well, first of all, you do a lot of advertising, I guess, in the Bay Area, so it's working. Yep. And second of all, the reason I reached out to a mutual friend, Joyce, was because I'm just generally interested in the consumer AI space, chat platforms in general. I think there's a lot of inference insights that we can get from that, as well as human psychology insights, kind of a weird blend of the two. And we also share a bit of a history as former finance people crossing over. I guess we can just kind of start it off with the origin story of Chai. Why decide to work on a consumer AI platform rather than B2B SaaS?

William [00:01:19]: So just quickly touching on the background in finance. Sure. Originally, I'm from the UK, born in London. And I was fortunate enough to go study economics at Cambridge. And I graduated in 2012. And at that time, everyone in the UK and everyone on my course, HFT, quant trading was really the big thing. It was like the big wave that was happening. So there was a lot of opportunity in that space. And throughout college, I'd sort of played poker. So I'd, you know, I dabbled as a professional poker player. And I was able to accumulate this sort of, you know, say $100,000 through playing poker. And at the time, as my friends would go work at companies like Jane Street or Citadel, I kind of did the maths. And I just thought, well, maybe if I traded my own capital, I'd probably come out ahead. I'd make more money than just going to work at Jane Street.

swyx [00:02:20]: With 100k base as capital?

William [00:02:22]: Yes, yes. That's not a lot. Well, it depends what strategies you're doing. And, you know, there is an advantage. There's an advantage to being small, right? Because there are, if you have a 10... Strategies that don't work in size. Exactly, exactly. So if you have a fund of $10 million, if you find a little anomaly in the market that you might be able to make 100k a year from, that's a 1% return on your 10 million fund. If your fund is 100k, that's 100% return, right? So being small, in some sense, was an advantage. So started off, and the, taught myself Python, and machine learning was like the big thing as well. Machine learning had really, it was the first, you know, big time machine learning was being used for image recognition, neural networks come out, you get dropout. And, you know, so this, this was the big thing that's going on at the time. So I probably spent my first three years out of Cambridge, just building neural networks, building random forests to try and predict asset prices, right, and then trade that using my own money. And that went well. And, you know, if you if you start something, and it goes well, you You try and hire more people. And the first people that came to mind was the talented people I went to college with. And so I hired some friends. And that went well and hired some more. And eventually, I kind of ran out of friends to hire. And so that was when I formed the company. And from that point on, we had our ups and we had our downs. And that was a whole long story and journey in itself. But after doing that for about eight or nine years, on my 30th birthday, which was four years ago now, I kind of took a step back to just evaluate my life, right? This is what one does when one turns 30. You know, I just heard it. I hear you. And, you know, I looked at my 20s and I loved it. It was a really special time. I was really lucky and fortunate to have worked with this amazing team, been successful, had a lot of hard times. 
And through the hard times, learned wisdom and then a lot of success and, you know, was able to enjoy it. And so the company was making about five million pounds a year. And it was just me and a team of, say, 15, like, Oxford and Cambridge educated mathematicians and physicists. It was like the real dream that you'd have if you wanted to start a quant trading firm. It was like...

swyx [00:04:40]: Your own, all your own money?

William [00:04:41]: Yeah, exactly. It was all the team's own money. We had no customers complaining to us about issues. There's no investors, you know, saying, you know, they don't like the risk that we're taking. We could really run the thing exactly as we wanted it. It's like Susquehanna or like RenTech. Yeah, exactly. Yeah. And they're the companies that we would kind of look towards as we were building that thing out. But on my 30th birthday, I look and I say, OK, great. This thing is making as much money as kind of anyone would really need. And I thought, well, what's going to happen if we keep going in this direction? And it was clear that we would never have a kind of a big, big impact on the world. We can enrich ourselves. We can make really good money. Everyone on the team would be paid very, very well. Presumably, I can make enough money to buy a yacht or something. But this stuff wasn't that important to me. And so I felt a sort of obligation that if you have this much talent and if you have a talented team, especially as a founder, you want to be putting all that talent towards a good use. I looked at the time at getting into crypto and I had a really strong view on crypto, which was that as far as a gambling device, this is like the most fun form of gambling invented, like, ever. Super fun. I thought as a way to evade monetary regulations and banking restrictions, it's also absolutely amazing. So it has two like killer use cases, not so much banking the unbanked. But everything else to do with the blockchain and, you know, was it Web 3.0 or, you know, that didn't really make much sense. And so instead of going into crypto, which I thought, even if I was successful, I'd end up in a lot of trouble, I thought maybe it'd be better to build something that governments wouldn't have a problem with.
I knew that LLMs were like a thing. I think OpenAI had said, they hadn't released GPT-3 yet, but they'd said GPT-3 is so powerful we can't release it to the world or something. Was it GPT-2? And then I started interacting with, I think Google had open sourced some language models. They weren't necessarily LLMs, but they, but they were. But yeah, exactly. So I was able to play around with them. Nowadays so many people have interacted with ChatGPT, they get it, but it's like the first time you can just talk to a computer and it talks back. It's kind of a special moment and you know, everyone who's done that goes like, wow, this is how it should be. Right. It should be like, rather than having to type on Google and search, you should just be able to ask Google a question. When I saw that I read the literature, I kind of came across the scaling laws and I think even four years ago, all the pieces of the puzzle were there, right? Google had done this amazing research and published, you know, a lot of it. OpenAI was still open. And so they'd published a lot of their research. And so you really could be fully informed on the state of AI and where it was going. And so at that point I was confident enough, it was worth a shot. I think LLMs are going to be the next big thing. And so that's the thing I want to be building in, in that space. And I thought what's the most impactful product I can possibly build. And I thought it should be a platform. So I myself love platforms. I think they're fantastic because they open up an ecosystem where anyone can contribute to it. Right. So if you think of a platform like a YouTube, instead of it being like a Hollywood situation where, if you want to make a TV show, you have to convince Disney to give you the money to produce it, instead, anyone in the world can post any content they want to YouTube. And if people want to view it, the algorithm is going to promote it. Nowadays, you can look at creators like Mr. Beast or Joe Rogan.
They would never have had that opportunity unless it was for this platform. Other ones like Twitter's a great one, right? But I would consider Wikipedia to be a platform where instead of the Britannica encyclopedia, which is this, it's like a monolithic, you get all the researchers together, you get all the data together and you combine it in this one monolithic source. Instead, you have this distributed thing. You can say anyone can host their content on Wikipedia. Anyone can contribute to it. And maybe their contribution is they delete stuff. When I was hearing like the kind of the Sam Altman and kind of the Muskian perspective of AI, it was a very kind of monolithic thing. It was all about AI is basically a single thing, which is intelligence. Yeah. Yeah. The more compute, the more intelligent, and the more and better AI researchers, the more intelligent, right? They would speak about it as a kind of a race, like who can get the most data, the most compute and the most researchers. And that would end up with the most intelligent AI. But I didn't believe in any of that. I thought that perspective is the perspective of someone who's never actually done machine learning. Because with machine learning, first of all, you see that the performance of the models follows an S curve. So it's not like it just goes off to infinity, right? And the S curve, it kind of plateaus around human level performance. And you can look at all the machine learning that was going on in the 2010s, everything kind of plateaued around the human level performance. And we can think about the self-driving car promises, you know, how Elon Musk kept saying the self-driving car is going to happen next year, it's going to happen next, next year. Or you can look at the image recognition, the speech recognition.
You can look at all of these things, there was almost nothing that went superhuman, except for something like AlphaGo. And we can speak about why AlphaGo was able to go like super superhuman. So I thought the most likely thing was going to be this, I thought it's not going to be a monolithic thing. That's like an Encyclopedia Britannica. I thought it must be a distributed thing. And I actually liked to look at the world of finance for what I think a mature machine learning ecosystem would look like. So, yeah. So finance is a machine learning ecosystem because all of these quant trading firms are running machine learning algorithms, but they're running it on a centralized platform like a marketplace. And it's not the case that there's one giant quant trading company of all the data and all the quant researchers and all the algorithms and compute, but instead they all specialize. So one will specialize on high frequency trading. Another will specialize on mid frequency. Another one will specialize on equity. Another one will specialize. And I thought that's the way the world works. That's how it is. And so there must exist a platform where a small team can produce an AI for a unique purpose. And they can iterate and build the best thing for that, right? And so that was the vision for Chai. So we wanted to build a platform for LLMs.

Alessio [00:11:36]: That's kind of the maybe inside versus contrarian view that led you to start the company. Yeah. And then what was maybe the initial idea maze? Because if somebody told you that was the Hugging Face founding story, people might believe it. It's kind of like a similar ethos behind it. How did you land on the product feature today? And maybe what were some of the ideas that you discarded that initially you thought about?

William [00:11:58]: So the first thing we built, it was fundamentally an API. So nowadays people would describe it as like agents, right? But anyone could write a Python script. They could submit it to an API. They could send it to the Chai backend and we would then host this code and execute it. So that's like the developer side of the platform. On their Python script, the interface was essentially text in and text out. An example would be the very first bot that I created. I think it was a Reddit news bot. And so it would first, it would pull the popular news. Then it would prompt whatever, like I just use some external API for like BERT or GPT-2 or whatever. Like it was a very, very small thing. And then the user could talk to it. So you could say to the bot, hi bot, what's the news today? And it would say, this is the top stories. And you could chat with it. Now four years later, that's like Perplexity or something. That's like the, right? But back then the models were first of all, like really, really dumb. You know, they had an IQ of like a four year old. And users, there really wasn't any demand or any PMF for interacting with the news. So then I was like, okay. Um. So let's make another one. And I made a bot, which was like, you could talk to it about a recipe. So you could say, I'm making eggs. Like I've got eggs in my fridge. What should I cook? And it'll say, you should make an omelet. Right. There was no PMF for that. No one used it. And so I just kept creating bots. And so every single night after work, I'd be like, okay, I like, we have AI, we have this platform. I can create any text-in, text-out sort of agent and put it on the platform. And so we just create stuff night after night. And then all the coders I knew, I would say, yeah, this is what we're going to do. And then I would say to them, look, there's this platform. You can create any like chat AI. You should put it on. And you know, everyone's like, well, chatbots are super lame.
We want absolutely nothing to do with your chatbot app. No one who knew Python wanted to build on it. I'm like trying to build all these bots and no consumers want to talk to any of them. And then my sister who at the time was like just finishing college or something, I said to her, I was like, if you want to learn Python, you should just submit a bot for my platform. And she, she built a therapy for me. And I was like, okay, cool. I'm going to build a therapist bot. And then the next day I checked the performance of the app and I'm like, oh my God, we've got 20 active users. And they spent, they spent like an average of 20 minutes on the app. I was like, oh my God, what, what bot were they speaking to for an average of 20 minutes? And I looked and it was the therapist bot. And I went, oh, this is where the PMF is. There was no demand for, for recipe help. There was no demand for news. There was no demand for dad jokes or pub quiz or fun facts or what they wanted was they wanted the therapist bot. the time I kind of reflected on that and I thought, well, if I want to consume news, the most fun thing, most fun way to consume news is like Twitter. It's not like the value of there being a back and forth, wasn't that high. Right. And I thought if I need help with a recipe, I actually just go like the New York times has a good recipe section, right? It's not actually that hard. And so I just thought the thing that AI is 10 X better at is a sort of a conversation right. That's not intrinsically informative, but it's more about an opportunity. You can say whatever you want. You're not going to get judged. If it's 3am, you don't have to wait for your friend to text back. It's like, it's immediate. They're going to reply immediately. You can say whatever you want. It's judgment-free and it's much more like a playground. It's much more like a fun experience. And you could see that if the AI gave a person a compliment, they would love it. 
It's much easier to get the AI to give you a compliment than a human. From that day on, I said, okay, I get it. Humans want to speak to like humans or human like entities and they want to have fun. And that was when I started to look less at platforms like Google. And I started to look more at platforms like Instagram. And I was trying to think about why do people use Instagram? And I could see that I think Chai was filling the same desire or the same drive. If you go on Instagram, typically you want to look at the faces of other humans, or you want to hear about other people's lives. So if it's like The Rock is making himself pancakes on a cheat day, you kind of feel a little bit like you're The Rock's friend, or you're like having pancakes with him or something, right? But if you do it too much, you feel like you're sad and like a lonely person, but with AI, you can talk to it and tell it stories and it tells you stories, and you can play with it for as long as you want. And you don't feel like you're like a sad, lonely person. You feel like you actually have a friend.

Alessio [00:16:29]: And what, why is that? Do you have any insight on that from using it?

William [00:16:33]: I think it's just the human psychology. I think it's just the idea that, with old school social media. You're just consuming passively, right? So you'll just swipe. If I'm watching TikTok, just like swipe and swipe and swipe. And even though I'm getting the dopamine of like watching an engaging video, there's this other thing that's building my head, which is like, I'm feeling lazier and lazier and lazier. And after a certain period of time, I'm like, man, I just wasted 40 minutes. I achieved nothing. But with AI, because you're interacting, you feel like you're, it's not like work, but you feel like you're participating and contributing to the thing. You don't feel like you're just. Consuming. So you don't have a sense of remorse basically. And you know, I think on the whole people, the way people talk about, try and interact with the AI, they speak about it in an incredibly positive sense. Like we get people who say they have eating disorders saying that the AI helps them with their eating disorders. People who say they're depressed, it helps them through like the rough patches. So I think there's something intrinsically healthy about interacting that TikTok and Instagram and YouTube doesn't quite tick. From that point on, it was about building more and more kind of like human centric AI for people to interact with. And I was like, okay, let's make a Kanye West bot, right? And then no one wanted to talk to the Kanye West bot. And I was like, ah, who's like a cool persona for teenagers to want to interact with. And I was like, I was trying to find the influencers and stuff like that, but no one cared. Like they didn't want to interact with the, yeah. And instead it was really just the special moment was when we said the realization that developers and software engineers aren't interested in building this sort of AI, but the consumers are right. 
And rather than me trying to guess every day, like what's the right bot to submit to the platform, why don't we just create the tools for the users to build it themselves? And so nowadays this is like the most obvious thing in the world, but when Chai first did it, it was not an obvious thing at all. Right. Right. So we took the API for let's just say it was, I think it was GPT-J, which was this 6 billion parameter open source transformer style LLM. We took GPT-J. We let users create the prompt. We let users select the image and we let users choose the name. And then that was the bot. And through that, they could shape the experience, right? So if they said this bot's going to be really mean, and it's going to be called like bully in the playground, right? That was like a whole category that I never would have guessed. Right. People love to fight. They love to have a disagreement, right? And then they would create, there'd be all these romantic archetypes that I didn't know existed. And so as the users could create the content that they wanted, that was when Chai was able to get this huge variety of content and rather than appealing to, you know, 1% of the population that I'd figured out what they wanted, you could appeal to a much, much broader thing. And so from that moment on, it was very, very crystal clear. It's like Chai, just as Instagram is this social media platform that lets people create images and upload images, videos and upload that, Chai was really about how can we let the users create this experience in AI and then share it and interact and search. So it's really, you know, I say it's like a platform for social AI.

Alessio [00:20:00]: Where did the Chai name come from? Because you started the same path. I was like, is it Character AI shortened? You started at the same time, so I was curious. The UK origin was like the second, the Chai.

William [00:20:15]: We started way before Character AI. And there's an interesting story that Chai's numbers were very, very strong, right? So I think in even 20, I think late 2022, was it late 2022 or maybe early 2023? Chai was like the number one AI app in the app store. So we would have something like 100,000 daily active users. And then one day we kind of saw there was this website. And we were like, oh, this website looks just like Chai. And it was the Character AI website. And I think that nowadays it's, I think it's much more common knowledge that when they left Google with the funding, I think they knew what was the most trending, the number one app. And I think they sort of built that. Oh, you found the people.

swyx [00:21:03]: You found the PMF for them.

William [00:21:04]: We found the PMF for them. Exactly. Yeah. So I worked a year very, very hard. And then they, and then that was when I learned a lesson, which is that if you're VC backed and if, you know, so Chai, we'd kind of run, we'd got to this point, I was the only person who'd invested. I'd invested maybe 2 million pounds in the business. And you know, from that, we were able to build this thing, get to say a hundred thousand daily active users. And then when Character AI came along, the first version, we sort of laughed. We were like, oh man, this thing sucks. Like they don't know what they're building. They're building the wrong thing anyway, but then I saw, oh, they've raised a hundred million dollars. Oh, they've raised another hundred million dollars. And then our users started saying, oh guys, your AI sucks. Cause we were serving a 6 billion parameter model, right? How big was the model that Character AI could afford to serve, right? So we would be spending, let's say we would spend a dollar per user, right? Over the, you know, the entire lifetime.

swyx [00:22:01]: A dollar per session, per chat, per month? No, no, no, no.

William [00:22:04]: Let's say we'd get over the course of the year, we'd have a million users and we'd spend a million dollars on the AI throughout the year. Right. Like aggregated. Exactly. Exactly. Right. They could spend a hundred times that. So people would say, why is your AI much dumber than Character AI's? And then I was like, oh, okay, I get it. This is like the Silicon Valley style, um, hyper scale business. And so, yeah, we moved to Silicon Valley and, uh, got some funding and iterated and built the flywheels. And, um, yeah, I, I'm very proud that we were able to compete with that. Right. So, and I think the reason we were able to do it was just customer obsession. And it's similar, I guess, to how DeepSeek have been able to produce such a compelling model when compared to someone like an OpenAI, right? So DeepSeek, you know, their latest, um, V2, yeah, they claim to have spent 5 million training it.

swyx [00:22:57]: It may be a bit more, but, um, like, why are you making it? Why are you making such a big deal out of this? Yeah. There's an agenda there. Yeah. You brought up DeepSeek. So we have to ask you had a call with them.

William [00:23:07]: We did. We did. We did. Um, let me think what to say about that. I think for one, they have an amazing story, right? So their background is again in finance.

swyx [00:23:16]: They're the Chinese version of you. Exactly.

William [00:23:18]: Well, there's a lot of similarities. Yes. Yes. I have a great affinity for companies which are like, um, founder led, customer obsessed and just try and build something great. And I think what deep seek have achieved. There's quite special is they've got this amazing inference engine. They've been able to reduce the size of the KV cash significantly. And then by being able to do that, they're able to significantly reduce their inference costs. And I think with kind of with AI, people get really focused on like the kind of the foundation model or like the model itself. And they sort of don't pay much attention to the inference. To give you an example with Chai, let's say a typical user session is 90 minutes, which is like, you know, is very, very long for comparison. Let's say the average session length on TikTok is 70 minutes. So people are spending a lot of time. And in that time they're able to send say 150 messages. That's a lot of completions, right? It's quite different from an open AI scenario where people might come in, they'll have a particular question in mind. And they'll ask like one question. And a few follow up questions, right? So because they're consuming, say 30 times as many requests for a chat, or a conversational experience, you've got to figure out how to how to get the right balance between the cost of that and the quality. And so, you know, I think with AI, it's always been the case that if you want a better experience, you can throw compute at the problem, right? So if you want a better model, you can just make it bigger. If you want it to remember better, give it a longer context. And now, what open AI is doing to great fanfare is with projection sampling, you can generate many candidates, right? And then with some sort of reward model or some sort of scoring system, you can serve the most promising of these many candidates. And so that's kind of scaling up on the inference time compute side of things. 
And so for us, it doesn't make sense to think of AI as just the absolute performance. So with the MMLU score or, you know, any of these benchmarks that people like to look at, if you just get that score, it doesn't really tell you anything. Because really, progress is made by improving the performance per dollar. And so I think that's an area where DeepSeek have been able to perform very, very well, surprisingly so. And so I'm very interested in what Llama 4 is going to look like, and if they're able to sort of match what DeepSeek have been able to achieve with this performance per dollar gain.

Alessio [00:25:59]: Before we go into the inference, some of the deeper stuff, can you give people an overview of like some of the numbers? So I think last I checked, you have like 1.4 million daily active now. It's like over 22 million of revenue. So it's quite a business.

William [00:26:12]: Yeah, I think we grew by a factor of, you know, users grew by a factor of three last year. Revenue over doubled. You know, it's very exciting. We're competing with some really big, really well funded companies. Character AI got this, I think it was almost a $3 billion valuation. And they have 5 million DAU is a number that I last heard. Talkie, which is a Chinese built app owned by a company called Minimax. They're incredibly well funded. And these companies didn't grow by a factor of three last year. Right. And so when you've got this company and this team that's able to keep building something that gets users excited, and they want to tell their friend about it, and then they want to come and they want to stick on the platform. I think that's very special. And so last year was a great year for the team. And yeah, I think the numbers reflect the hard work that we put in. And then fundamentally, the quality of the app, the quality of the content, the quality of the AI is the quality of the experience that you have. You actually published your DAU growth chart, which is unusual. And I see some inflections. Like, it's not just a straight line. There's some things that actually inflect. Yes. What were the big ones? Cool. That's a great, great, great question. Let me think of a good answer. I'm basically looking to annotate this chart, which doesn't have annotations on it. Cool. The first thing I would say is this is, I think the most important thing to know about success is that success is born out of failures. Right? It's through failures that we learn. You know, if you think something's a good idea, and you do it and it works, great, but you didn't actually learn anything, because everything went exactly as you imagined. But if you have an idea, you think it's going to be good, you try it, and it fails. There's a gap between the reality and expectation.
And that's an opportunity to learn. The flat periods, that's us learning. And then the up periods, that's us reaping the rewards of that. So of the growth chart of just 2024, I think the first thing that really kind of put a dent in our growth was our backend. So we just reached this scale. So we'd, from day one, we'd built on top of Google's GCP, which is Google's cloud platform. And they were fantastic. We used them when we had one daily active user, and they worked pretty good all the way up till we had about 500,000. It was never the cheapest, but from an engineering perspective, man, that thing scaled insanely good. Like, not Vertex? Not Vertex. Like GKE, that kind of stuff? We use Firebase. So we use Firebase. I'm pretty sure we're the biggest user ever on Firebase. That's expensive. Yeah, we had calls with engineers, and they're like, we wouldn't recommend using this product beyond this point, and you're 3x over that. So we pushed Google to their absolute limits. You know, it was fantastic for us, because we could focus on the AI. We could focus on just adding as much value as possible. But then what happened was, after 500,000, just the way we were using it, it wouldn't scale any further. And so we had a really, really painful, at least three-month period, as we kind of migrated between different services, figuring out, like, what requests do we want to keep on Firebase, and what ones do we want to move on to something else? And then, you know, making mistakes. And learning things the hard way. And then after about three months, we got that right. So we would then be able to scale to the 1.5 million DAU without any further issues from the GCP. But what happens is, if you have an outage, new users who go on your app experience a dysfunctional app, and then they're going to exit. And so your next day, the key metrics that the app stores track are going to be something like retention rates.
Money spent, and the star, like, the rating that they give you. In the app store. In the app store, yeah. Tyranny. So if you're ranked top 50 in entertainment, you're going to acquire a certain rate of users organically. If you go in and have a bad experience, it's going to tank where you're positioned in the algorithm. And then it can take a long time to kind of earn your way back up, at least if you wanted to do it organically. If you throw money at it, you can jump to the top. And I could talk about that. But broadly speaking, if we look at 2024, the first kink in the graph was outages due to hitting 500k DAU. The backend didn't want to scale past that. So then we just had to do the engineering and build through it. Okay, so we built through that, and then we get a little bit of growth. And so, okay, that's feeling a little bit good. I think the next thing, I think it's, I'm not going to lie, I have a feeling that when Character AI got... I was thinking. I think so. I think... So the Character AI team fundamentally got acquired by Google. And I don't know what they changed in their business. I don't know if they dialed down that ad spend. Products don't change, right? Products just what it is. I don't think so. Yeah, I think the product is what it is. It's like maintenance mode. Yes. I think the issue that people, you know, some people may think this is an obvious fact, but running a business can be very competitive, right? Because other businesses can see what you're doing, and they can imitate you. And then there's this... 
There's this question of, if you've got one company that's spending $100,000 a day on advertising, and you've got another company that's spending zero, if you consider market share, and if you're considering new users which are entering the market, the guy that's spending $100,000 a day is going to be getting 90% of those new users. And so I have a suspicion that when the founders of Character AI left, they dialed down their spending on user acquisition. And I think that kind of gave oxygen to like the other apps. And so Chai was able to then start growing again in a really healthy fashion. I think that's kind of like the second thing. I think a third thing is we've really built a great data flywheel. Like the AI team sort of perfected their flywheel, I would say, at the end of Q2. And I could speak about that at length. But fundamentally, the way I would describe it is when you're building anything in life, you need to be able to evaluate it. And through evaluation, you can iterate. We can look at benchmarks, and we can say the issues with benchmarks and why they may not generalize as well as one would hope and the challenges of working with them. But something that works incredibly well is getting feedback from humans. And so we built this thing where anyone can submit a model to our developer backend, and it gets put in front of 5000 users, and the users can rate it. And we can then have a really accurate ranking of like which models users are finding more engaging or more entertaining. And it gets, you know, it's at this point now, where every day we're able to, I mean, we evaluate between 20 and 50 models, LLMs, every single day, right. So even though we've only got a team of, say, five AI researchers, they're able to iterate a huge quantity of LLMs, right. So our team ships, let's just say minimum 100 LLMs a week is what we're able to iterate through.
Now, before that moment in time, we might iterate through three a week, we might, you know, there was a time when even doing like five a month was a challenge, right? By being able to change the feedback loops to the point where it's not, let's launch these three models, let's do an A-B test, let's assign, let's do different cohorts, let's wait 30 days to see what the day 30 retention is, which is the kind of the, if you're doing an app, that's like A-B testing 101 would be, do a 30-day retention test, assign different treatments to different cohorts and come back in 30 days. So that's insanely slow. That's just, it's too slow. And so we were able to get that 30-day feedback loop all the way down to something like three hours. And when we did that, we could really, really, really perfect techniques like DPO, fine tuning, prompt engineering, blending, rejection sampling, training a reward model, right, really successfully, like boom, boom, boom, boom, boom. And so I think in Q3 and Q4, we got, the amount of AI improvements we got was like astounding. It was getting to the point, I thought like how much more, how much more edge is there to be had here? But the team just could keep going and going and going. That was like number three for the inflection point.

swyx [00:34:53]: There's a fourth?

William [00:34:54]: The important thing about the third one is if you go on our Reddit or you talk to users of AI, there's like a clear date. It's like somewhere in October or something. The users, they flipped. Before October, the users would say Character AI is better than you, for the most part. Then from October onwards, they would say, wow, you guys are better than Character AI. And that was like a really clear positive signal that we'd sort of done it. And I think people, you can't cheat consumers. You can't trick them. You can't bullshit them. They know, right? If you're going to spend 90 minutes on a platform, and with apps, the barriers to switching are pretty low. Like you can try Character AI for a day. If you get bored, you can try Chai. If you get bored of Chai, you can go back to Character. So the users, the loyalty is not strong, right? What keeps them on the app is the experience. If you deliver a better experience, they're going to stay and they can tell. So the fourth one was we were fortunate enough to get this hire. We hired one really talented engineer. And then they said, oh, at my last company, we had a head of growth. He was really, really good. And he was the head of growth for ByteDance for two years. Would you like to speak to him? And I was like, yes. Yes, I think I would. And so I spoke to him. And he just blew me away with what he knew about user acquisition. You know, it was like a 3D chess

swyx [00:36:21]: sort of thing. You know, as much as, as I know about AI. Like ByteDance as in TikTok US. Yes.

William [00:36:26]: Not ByteDance as other stuff. Yep. He was interviewing us as we were interviewing him. Right. And so pick up options. Yeah, exactly. And so he was kind of looking at our metrics. And he was like, I saw him get really excited when he said, guys, you've got a million daily active users and you've done no advertising. I said, correct. And he was like, that's unheard of. He's like, I've never heard of anyone doing that. And then he started looking at our metrics. And he was like, if you've got all of this organically, if you start spending money, this is going to be very exciting. I was like, let's give it a go. So then he came in, we've just started ramping up the user acquisition. So that looks like spending, you know, let's say we're spending, we started spending $20,000 a day, it looked very promising than 20,000. Right now we're spending $40,000 a day on user acquisition. That's still only half of what like character AI or talkie may be spending. But from that, it's sort of, we were growing at a rate of maybe say, 2x a year. And that got us growing at a rate of 3x a year. So I'm growing, I'm evolving more and more to like a Silicon Valley style hyper growth, like, you know, you build something decent, and then you can

swyx [00:37:33]: slap on a huge... You did the important thing, you did the product first.

William [00:37:36]: Of course, but then you can slap on like, like the rocket or the jet engine or something, which is just this cash in, you pour in as much cash, you buy a lot of ads, and your growth is faster.

swyx [00:37:48]: Not to, you know, I'm just kind of curious what's working right now versus what surprisingly

William [00:37:52]: doesn't work. Oh, there's a long, long list of surprising stuff that doesn't work. Yeah. The surprising thing, like the most surprising thing, what doesn't work is almost everything doesn't work. That's what's surprising. And I'll give you an example. So like a year and a half ago, I was working at a company, we were super excited by audio. I was like, audio is going to be the next killer feature, we have to get in the app. And I want to be the first. So everything Chai does, I want us to be the first. We may not be the company that's strongest at execution, but we can always be the

swyx [00:38:22]: most innovative. Interesting. Right? So we can... You're pretty strong at execution.

William [00:38:26]: We're much stronger, we're much stronger. A lot of the reason we're here is because we were first. If we launched today, it'd be so hard to get the traction. Because it's like to get the flywheel, to get the users, to build a product people are excited about. If you're first, people are naturally excited about it. But if you're fifth or 10th, man, you've got to be

swyx [00:38:46]: insanely good at execution. So you were first with voice? We were first. We were first. I only know

William [00:38:51]: when character launched voice. They launched it, I think they launched it at least nine months after us. Okay. Okay. But the team worked so hard for it. At the time we did it, latency is a huge problem. Cost is a huge problem. Getting the right quality of the voice is a huge problem. Right? Then there's this user interface and getting the right user experience. Because you don't just want it to start blurting out. Right? You want to kind of activate it. But then you don't have to keep pressing a button every single time. There's a lot that goes into getting a really smooth audio experience. So we went ahead, we invested the three months, we built it all. And then when we did the A-B test, there was like, no change in any of the numbers. And I was like, this can't be right, there must be a bug. And we spent like a week just checking everything, checking again, checking again. And it was like, the users just did not care. And it was something like only 10 or 15% of users even click the button to like, they wanted to engage the audio. And they would only use it for 10 or 15% of the time. So if you do the math, if it's just like something that one in seven people use it for one seventh of their time. You've changed like 2% of the experience. So even if that that 2% of the time is like insanely good, it doesn't translate much when you look at the retention, when you look at the engagement, and when you look at the monetization rates. So audio did not have a big impact. I'm pretty big on audio. But yeah, I like it too. But it's, you know, so a lot of the stuff which I do, I'm a big, you can have a theory. And you resist. Yeah. Exactly, exactly. So I think if you want to make audio work, it has to be a unique, compelling, exciting experience that they can't have anywhere else.
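The "do the math" step above can be checked directly. This is only a back-of-the-envelope sketch: the function name is made up for illustration, and the inputs are the rough figures quoted in the conversation (10 to 15% of users engaging audio, for 10 to 15% of their time, or "one in seven" for "one seventh").

```python
def share_of_experience(user_fraction: float, time_fraction: float) -> float:
    """Fraction of total user time touched by a feature.

    Assumes usage time is spread evenly across users, which is a simplification.
    """
    return user_fraction * time_fraction


low = share_of_experience(0.10, 0.10)            # 10% of users, 10% of their time
high = share_of_experience(0.15, 0.15)           # 15% of users, 15% of their time
one_in_seven = share_of_experience(1 / 7, 1 / 7)  # the "one in seven" framing

print(f"low: {low:.2%}, high: {high:.2%}, one-in-seven: {one_in_seven:.2%}")
```

All three cases land at roughly 1 to 2% of the overall experience, which matches the "you've changed like 2% of the experience" conclusion: even a large improvement inside that slice barely moves aggregate retention or monetization.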

swyx [00:40:37]: It could be your models, which just weren't good enough.

William [00:40:39]: No, no, no, they were great. Oh, yeah, they were very good. it was like, it was kind of like just the, you know, if you listen to like an audible or Kindle, or something like, you just hear this voice. And it's like, you don't go like, wow, this is this is special, right? It's like a convenience thing. But the idea is that if you can, if Chai is the only platform, like, let's say you have a Mr. Beast, and YouTube is the only platform you can use to make audio work, then you can watch a Mr. Beast video. And it's the most engaging, fun video that you want to watch, you'll go to a YouTube. And so it's like for audio, you can't just put the audio on there. And people go, oh, yeah, it's like 2% better. Or like, 5% of users think it's 20% better, right? It has to be something that the majority of people, for the majority of the experience, go like, wow, this is a big deal. That's the features you need to be shipping. If it's not going to appeal to the majority of people, for the majority of the experience, and it's not a big deal, it's not going to move you. Cool. So you killed it. I don't see it anymore. Yep. So I love this. The longer, it's kind of cheesy, I guess, but the longer I've been working at Chai, and I think the team agrees with this, all the platitudes, at least I thought they were platitudes, that you would get from like the Steve Jobs, which is like, build something insanely great, right? Or be maniacally focused, or, you know, the most important thing is saying no to, not to work on. All of these sort of lessons, they just are like painfully true. They're painfully true. So now I'm just like, everything I say, I'm either quoting Steve Jobs or Zuckerberg. I'm like, guys, move fast and break free.

swyx [00:42:10]: You've jumped the Apollo to cool it now.

William [00:42:12]: Yeah, it's just so, everything they said is so, so true. The turtle neck. Yeah, yeah, yeah. Everything is so true.

swyx [00:42:18]: This last question on my side, and I want to pass this to Alessio, is on just, just multi-modality in general. This actually comes from Justine Moore from A16Z, who's a friend of ours. And a lot of people are trying to do voice image video for AI companions. Yes. You just said voice didn't work. Yep. What would make you revisit?

William [00:42:36]: So Steve Jobs, he was very, listen, he was very, very clear on this. There's a habit of engineers who, once they've got some cool technology, they want to find a way to package up the cool technology and sell it to consumers, right? That does not work. So you're free to try and build a startup where you've got your cool tech and you want to find someone to sell it to. That's not what we do at Chai. At Chai, we start with the consumer. What does the consumer want? What is their problem? And how do we solve it? So right now, the number one problem for the users, it's not the audio. That's not the number one problem. It's not the image generation either. That's not their problem either. The number one problem for users in AI is this. All the AI is being generated by middle-aged men in Silicon Valley, right? That's all the content. You're interacting with this AI. You're speaking to it for 90 minutes on average. It's being trained by middle-aged men. The guys out there, they're like, oh, what should the AI say in this situation, right? What's funny, right? What's cool? What's boring? What's entertaining? That's not the way it should be. The way it should be is that the users should be creating the AI, right? And so the way I speak about it is this. Chai, we have this AI engine which sits atop a thin layer of UGC. So the thin layer of UGC is absolutely essential, right? But it's just prompts. It's just an image. It's just a name. It's like we've done 1% of what we could do. So we need to keep thickening up that layer of UGC. It must be the case that the users can train the AI. And if reinforcement learning is powerful and important, they have to be able to do that. And so it's got to be the case that there exists, you know, I say to the team, just as Mr. 
Beast is able to spend 100 million a year or whatever it is on his production company, and he's got a team building the content, which then he shares on the YouTube platform. Until there's a team that's earning 100 million a year or spending 100 million on the content that they're producing for the Chai platform, we're not finished, right? So that's the problem. That's what we're excited to build. And getting too caught up in the tech, I think is a fool's errand. It does not work.

Alessio [00:44:52]: As an aside, I saw the Beast Games thing on Amazon Prime. It's not doing well. And I'm

swyx [00:44:56]: curious. It's kind of like, I mean, the audience rating is high. The Rotten Tomatoes sucks, but the audience rating is high.

Alessio [00:45:02]: But it's not like in the top 10. I saw it dropped off of like the... Oh, okay. Yeah, that one I don't know. I'm curious, like, you know, it's kind of like similar content, but different platform. And then going back to like, some of what you were saying is like, you know, people come to Chai

William [00:45:13]: expecting some type of content. Yeah, I think it's something that's interesting to discuss is like, is moats. And what is the moat? And so, you know, if you look at a platform like YouTube, the moat, I think is in first is really is in the ecosystem. And the ecosystem, is comprised of you have the content creators, you have the users, the consumers, and then you have the algorithms. And so this, this creates a sort of a flywheel where the algorithms are able to be trained on the users, and the users data, the recommend systems can then feed information to the content creators. So Mr. Beast, he knows which thumbnail does the best. He knows the first 10 seconds of the video has to be this particular way. And so his content is super optimized for the YouTube platform. So that's why it doesn't do well on Amazon. If he wants to do well on Amazon, how many videos has he created on the YouTube platform? By thousands, 10s of 1000s, I guess, he needs to get those iterations in on the Amazon. So at Chai, I think it's all about how can we get the most compelling, rich user generated content, stick that on top of the AI engine, the recommender systems, in such that we get this beautiful data flywheel, more users, better recommendations, more creative, more content, more users.

Alessio [00:46:34]: You mentioned the algorithm, you have this idea of the Chaiverse on Chai, and you have your own kind of like LMSYS-like ELO system. Yeah, what are things that your models optimize for, like your users optimize for, and maybe talk about how you build it, how people submit models?

William [00:46:49]: So Chaiverse is what I would describe as a developer platform. More often when we're speaking about Chai, we're thinking about the Chai app. And the Chai app is really this product for consumers. And so consumers can come on the Chai app, they can interact with our AI, and they can interact with other UGC. And it's really just these kind of bots. And it's a thin layer of UGC. Okay. Our mission is not to just have a very thin layer of UGC. Our mission is to have as much UGC as possible. So we must have, I don't want people at Chai training the AI. I want people, not middle aged men, building AI. I want everyone building the AI, as many people building the AI as possible. Okay, so what we built was we built Chaiverse. And Chaiverse is kind of, it's kind of like a prototype, is the way to think about it. And it started with this, this observation that, well, how many models get submitted into Hugging Face a day? It's hundreds, it's hundreds, right? So there's hundreds of LLMs submitted each day. Now consider that, what does it take to build an LLM? It takes a lot of work, actually. It's like someone devoted several hours of compute, several hours of their time, prepared a data set, launched it, ran it, evaluated it, submitted it, right? So there's a lot of work that's going into that. So what we did was we said, well, why can't we host their models for them and serve them to users? And then what would that look like? The first issue is, well, how do you know if a model is good or not? Like, we don't want to serve users the crappy models, right? So what we would do is we would, I love the LMSYS style. I think it's really cool. It's really simple. It's a very intuitive thing, which is you simply present the users with two completions. You can say, look, this is from model A. 
This is from model B. Which is better? And so if someone submits a model to Chaiverse, what we do is we spin up a GPU. We download the model. We're going to now host that model on this GPU. And we're going to start routing traffic to it. And we're going to send, we think it takes about 5,000 completions to get an accurate signal. That's roughly what LMSYS does. And from that, we're able to get an accurate ranking of which models are people finding entertaining and which models are not entertaining. If you look at the bottom 80%, they'll suck. You can just disregard them. They totally suck. Then when you get the top 20%, you know you've got a decent model, but you can break it down into more nuance. There might be one that's really descriptive. There might be one that's got a lot of personality to it. There might be one that's really logical. Then the question is, well, what do you do with these top models? From that, you can do more sophisticated things. You can try and do like a routing thing where you say for a given user request, we're going to try and predict which of these N models that users enjoy the most. That turns out to be pretty expensive and not a huge source of like edge or improvement. Something that we love to do at Chai is blending, which is, you know, the simplest way to think about it is you're going to end up, and you're going to pretty quickly see you've got one model that's really smart, one model that's really funny. How do you get the user an experience that is both smart and funny? Well, just 50% of the requests, you can serve them the smart model, 50% of the requests, you serve them the funny model. Just a random 50%? Just a random, yeah. And then... That's blending? That's blending. 
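As a rough illustration of the two ideas just described (ranking models from pairwise "which is better" votes, then blending the top models by picking one at random per request), here is a small sketch. The standard Elo update formula is used; the starting rating of 1000, the K-factor of 32, the model names, and the vote list are all assumptions for illustration, not details of how Chaiverse actually computes its scores.

```python
import random
from typing import Dict, List, Tuple


def expected_score(rating_a: float, rating_b: float) -> float:
    """Standard Elo expectation that A is preferred over B."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(ratings: Dict[str, float], winner: str, loser: str, k: float = 32.0) -> None:
    """Apply one pairwise vote in place: the winner gains what the loser drops."""
    delta = k * (1.0 - expected_score(ratings[winner], ratings[loser]))
    ratings[winner] += delta
    ratings[loser] -= delta


def blend(models: List[str], rng: random.Random) -> str:
    """Blending in its simplest form: choose a model uniformly at random per request."""
    return rng.choice(models)


# Hypothetical models and user votes, recorded as (preferred, other).
ratings: Dict[str, float] = {"smart": 1000.0, "funny": 1000.0, "weak": 1000.0}
votes: List[Tuple[str, str]] = [
    ("smart", "weak"),
    ("funny", "weak"),
    ("smart", "funny"),
    ("funny", "smart"),
]
for winner, loser in votes:
    update_elo(ratings, winner, loser)

# Keep the top-ranked models and serve each request from a random one of them.
top_two = sorted(ratings, key=lambda name: ratings[name], reverse=True)[:2]
rng = random.Random(42)
served = [blend(top_two, rng) for _ in range(1000)]
print(top_two, served.count(top_two[0]), served.count(top_two[1]))
```

With only four votes the ratings are noisy; the conversation puts the figure at about 5,000 completions per model before the ranking is treated as reliable.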
You can do more sophisticated things on top of that, as in all things in life, but the 80-20 solution, if you just do that, you get a pretty powerful effect out of the gate. Random number generator. I think it's like the robustness of randomness. Random is a very powerful optimization technique, and it's a very robust thing. So you can explore a lot of the space very efficiently. There's one thing that's really, really important to share, and this is the most exciting thing for me, is after you do the ranking, you get an ELO score, and you can track a user's first join date, the first date they submit a model to Chaiverse, they almost always get a terrible ELO, right? So let's say the first submission they get an ELO of 1,100 or 1,000 or something, and you can see that they iterate and they iterate and iterate, and it will be like, no improvement, no improvement, no improvement, and then boom. Do you give them any data, or do you have to come up with this themselves? We do, we do, we do, we do. We try and strike a balance between giving them data that's very useful, you've got to be compliant with GDPR, which is like, you have to work very hard to preserve the privacy of users of your app. So we try to give them as much signal as possible, to be helpful. The minimum is we're just going to give you a score, right? That's the minimum. But that alone is people can optimize a score pretty well, because they're able to come up with theories, submit it, does it work? No. A new theory, does it work? No. And then boom, as soon as they figure something out, they keep it, and then they iterate, and then boom,

Alessio [00:51:46]: they figure something out, and they keep it. Last year, you had this post on your blog, crowdsourcing the leap to the 10 trillion parameter AGI, and you call it a mixture of experts recommenders. Yep. Any insights?

William [00:51:58]: Updated thoughts, 12 months later? I think the odds, the timeline for AGI has certainly been pushed out, right? Now, this is in, I'm a controversial person, I don't know, like, I just think... You don't believe in scaling laws, you think AGI is further away. I think it's an S-curve. I think everything's an S-curve. And I think that the models have proven to just be far worse at reasoning than people sort of thought. And I think whenever I hear people talk about LLMs as reasoning engines, I sort of cringe a bit. I don't think that's what they are. I think of them more as like a simulator. I think of them as like a, right? So they get trained to predict the next most likely token. It's like a physics simulation engine. So you get these like games where you can like construct a bridge, and you drop a car down, and then it predicts what should happen. And that's really what LLMs are doing. It's not so much that they're reasoning, it's more that they're just doing the most likely thing. So fundamentally, the ability for people to add in intelligence, I think is very limited. What most people would consider intelligence, I think the AI is not a crowdsourcing problem, right? Now with Wikipedia, Wikipedia crowdsources knowledge. It doesn't crowdsource intelligence. So it's a subtle distinction. AI is fantastic at knowledge. I think it's weak at intelligence. And a lot, it's easy to conflate the two because if you ask it a question and it gives you, you know, if you said, who was the seventh president of the United States, and it gives you the correct answer, I'd say, well, I don't know the answer to that. And you can conflate that with intelligence. But really, that's a question of knowledge. And knowledge is really this thing about saying, how can I store all of this information? And then how can I retrieve something that's relevant? Okay, they're fantastic at that. They're fantastic at storing knowledge and retrieving the relevant knowledge. 
They're superior to humans in that regard. And so I think we need to come up with a new word. How does one describe AI should contain more knowledge than any individual human? It should be more accessible than any individual human. That's a very powerful thing. That's super

swyx [00:54:07]: powerful. But what words do we use to describe that? We had a previous guest on Exa AI that does search. And he tried to coin super knowledge as the opposite of super intelligence.

William [00:54:20]: Exactly. I think super knowledge is a more accurate word for it.

swyx [00:54:24]: You can store more things than any human can.

William [00:54:26]: And you can retrieve it better than any human can as well. And I think it's those two things combined that's special. I think that thing will exist. That thing can be built. And I think you can start with something that's entertaining and fun. And I think, I often think it's like, look, it's going to be a 20 year journey. And we're in like, year four, or it's like the web. And this is like 1998 or something. You know, you've got a long, long way to go before the Amazon.coms are like these huge, multi trillion dollar businesses that every single person uses every day. And so AI today is very simplistic. And it's fundamentally the way we're using it, the flywheels, and this ability for how can everyone contribute to it to really magnify the value that it brings. Right now, like, I think it's a bit sad. It's like, right now you have big labs, I'm going to pick on OpenAI. And they kind of go to like these human labelers. And they say, we're going to pay you to just label this like subset of questions that we want to get a really high quality data set, then we're going to get like our own computers that are really powerful. And that's kind of like the thing. For me, it's so much like Encyclopedia Britannica. It's like insane. All the people that were interested in blockchain, it's like, well, this is what needs to be decentralized, you need to decentralize that thing. Because if you distribute it, people can generate way more data in a distributed fashion, way more, right? You need the incentive. Yeah, of course. Yeah. But I mean, that's kind of the exciting thing about Wikipedia was it's this understanding, like the incentives, you don't need money to incentivize people. You don't need Dogecoins. No. Sometimes, sometimes people get the satisfaction from just seeing the correct thing. Number go up. Yeah, yeah. I mean, you do pay money for Chaiverse. We've, we've paid out over $100,000 to model creators.
But do you know what we saw? It's not motivating. We saw that it didn't really make a difference. Like if they were submitting models at a certain rate, if you pay them a bunch of money, they didn't change the rate. What the money let them do was if they wanted to fine tune Llama 70B on eight H100s overnight, if you give them money, then they can do it. Or you could give them compute. Yeah. So I think the most exciting person we ever saw from interacting with Chaiverse was some kid who was like 17 years old. I think we gave him $1,000 and he spent all the money on buying a physical computer. And he took a picture of it and said, this is what I bought. And I'm going to be training more models with it. So that's why I love platforms.

swyx [00:57:00]: Should you hire him or?

William [00:57:02]: That's the temptation. Yeah. That's the temptation. But you want to keep the team small? No, no. As a platform, we can't just hire every good content creator. We've got to build the systems and the best content creator today isn't going to be the best content creator next year.

Alessio [00:57:14]: What about evals? So you've talked about reasoning and knowledge. Most of the benchmarks that people use want to mimic reasoning. Yep. I want to register, I disagree on the reasoning, but we have to keep going. Yeah, I'm curious, like how do you think about the evals that matter to you?

swyx [00:57:29]: So yeah, like Elo cannot be the only eval. You must have internal evals. You mentioned evals.

William [00:57:34]: I think Elo is a fantastic north star and the reason for it, or like it's the main one we want to see go up because it's this human feedback. The humans know what they want. It's beautiful because when you come up with an eval, you're further removing yourself away from the true problem. Right? So whatever it is you're trying to optimize or figure out, you kind of have to slice it. And then you've got this, it's like a snapshot. Like as soon as you saturate one eval, you need to figure out a new eval. But by saying to humans, just which is better, A or B, it's super robust. It's super generalizable. It just keeps scaling. So we've in the past used evals to get through a blocker. I mean, a great example is like having like a safety filter or something. Yeah. Where you want to make sure your models, because listen, users find, you'll be shocked at the correlation with not family friendly content, whether that's just like swearing, like people find it funny when the AI swears. So if you have two completions, A or B, like if you give me any LLM, I can make it 20% funnier just by training it to throw in swear words. So the issue with that is it's like, how are we measuring like quality improvements? Are we measuring superficial improvements? Right. And this actually links back to LMSYS. They did a style control.

swyx [00:58:54]: We actually had them on the podcast.

William [00:58:56]: Yeah. Yeah. And so that's the way I, I would rather just lean on human feedback and just continue to make that more and more robust and more and more useful. And, you know, you can say some people are like GPU poor and GPU rich. We're like, we're feedback rich. Like when you've got one and a half million people a day, we get as much feedback from humans as we want. So we're not in a position where we needed to have the evals very much. Yeah. And when we do, we saturate them pretty quick. So a safety one, you know, within a month, we don't need to use it anymore because it's sort of, it's, you know, the issue has been addressed.
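
For readers unfamiliar with how "which is better, A or B" votes become a single number, here is a minimal sketch of the standard Elo update in Python. It is an illustration only, not Chai's ranking code: the function names, the starting rating of 1000, and the K-factor of 32 are all assumptions chosen for the example.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A is preferred over B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(ratings: dict, winner: str, loser: str, k: float = 32.0) -> dict:
    """Apply one human A/B preference to the ratings table (mutates and returns it).

    The default rating (1000) and K-factor (32) are illustrative assumptions.
    """
    r_win = ratings.get(winner, 1000.0)
    r_lose = ratings.get(loser, 1000.0)
    # How surprising the result was: small if the favourite won, large on an upset.
    delta = k * (1.0 - expected_score(r_win, r_lose))
    ratings[winner] = r_win + delta
    ratings[loser] = r_lose - delta
    return ratings


# Hypothetical votes: each tuple is (preferred model, other model).
votes = [("model_a", "model_b"), ("model_a", "model_c"), ("model_c", "model_b")]
ratings = {}
for preferred, other in votes:
    update_elo(ratings, preferred, other)
```

Because each vote only asks which completion the user preferred, the same loop keeps working as new models are added, which is the robustness William is describing. It also shows the failure mode he raises: if users reliably prefer completions with swearing, a model that swears more will climb the table regardless of underlying quality.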

swyx [00:59:29]: I think one problem I have, and this is a broader product question maybe, is that the Elos apply to the whole user population. That's right. Clearly the user behavior, there's segments that have like, I'm a role play person, I'm a therapy person, I'm a not safe for work person. You don't split them?

William [00:59:44]: This is why I say like, I think we're in year four of like a 20 year thing where it's like, at the end of the day, I'm a role play person. And I think if we all go on like Spotify or like, imagine if Spotify only had the top five musicians, I think it would retain over 85% of its existing users. Yeah. Right. And I think if YouTube only kept the top five content creators, it would be enough for the vast majority of people. The thing I'm just trying to share here is there's one surprising thing about humans is their preferences are pretty correlated. What you find funny and entertaining, I find funny and entertaining, and he finds funny and entertaining. There might be degrees of variation in it, I might find it super funny, you might find it only slightly funny, but optimizing to a global works very, very well. And for segmentation to be really powerful, segmentation will work amazing if you found a comment super boring, and I found it super fun. If we could segment that, then that would unlock really powerful stuff. But unfortunately, that's not the shape of human behavior, right? It's like, I might rank it 10 out of 10 funny, you might rank it 7 out of 10 funny. And it's like, it doesn't give you as much space to play as you would hope. It's an element of the diversity of content that AI can produce right now, which is it's not as diverse as if you consider a platform like YouTube, you can watch a MrBeast video, that's totally different to a makeup tutorial. So there's enough diversity there where if you go on my YouTube feed, it is totally different to my sister's one. My sister's one, it's all like women, and if you go on mine, it's all like bald, middle-aged men, either talking about MMA or, right? I think with AI, it's still a bit too early for that degree of segmentation. So I think it all comes, the recommender systems, the personalization.
But this is why I like the, don't start with the technology, start with the problem. The problem is UGC. We must give users the tools to build more variety and more engaging content.

swyx [01:01:42]: Yeah. I feel like there's... I was surprised at how thin it was when I tried out Chai. Yeah. It's very thin. Haven't you been tempted? Like there's this ecosystem of Kobold, SillyTavern, those guys. They have model cards. It seems like an industry standard almost. Yeah, agreed. Can I just import those? I don't think I want to say.

William [01:02:01]: Oh, you're already working on it. No, it's like, I remember when Chai, SillyTavern, and like Kobold... KoboldAI is basically as old as Chai. So when we just existed, they just existed. And both of us were using GPT-J, yeah, yeah, yeah. And I remember very early on, I was like, these guys shouldn't even exist. Because if we build a good enough platform, they should just be posting their content on our platform.

swyx [01:02:28]: Yeah, but they're open source. No, exactly.

William [01:02:30]: That was what I learned. Eventually, I learned what they're excited about is slightly different from a typical consumer. My answer is, it's kind of like a complex thing where it's really down to what the content creator wants. Typically they're building it for themselves. And typically they want to create an experience for themselves. So one content creator might have to write a thousand words describing, let's take a science fiction scenario. Let's say, okay, you're on a spaceship and you're going off into space and your crew, these are your crew members. You've got one that's really friendly, one that's really mean, and you're the new cadet and you want to rise to the top. And they can really go into great detail, right? And then you can give that to like a Llama 70B. And Llama 70B will do a pretty good job of adhering to the prompt and the user will have a good experience. Okay. Very few users will ever go to that level of content creation. If instead we can really make the AI understand the user more so that rather than having to use a thousand characters or a thousand tokens to describe the scenario, we can just say, look, you're on a spaceship. You've got three crewmates. It's going to be dramatic and there should be some fighting. And then the AI gives you an even better experience. Then the content creator is happier. And so fundamentally, the way I'd kind of think about it is there's the steerability of the AI. And so a lot of the work we do at Chai is really about saying we want the AI to react to the user and react to the content creator in the way that they most want. One kind of like analog would be TikTok. I think the thing that TikTok did insanely good was they made it really easy for like anyone. If you make a video on TikTok, almost anyone can make a kind of fun video really easy. You just put some music on the top of it. You throw some of the animations on top and it's not hard to have a pretty fun thing.
And I think that's much more like the Chai style where it's like users don't want to have to work. You know, if your content is only good if you have like Shakespeare, it's better if just anyone at home can make the thing. So that's kind of like my answer to the SillyTavern style. And I think the right answer is how do you get the SillyTavern people fine tuning models that create a really special effect.

Alessio [01:04:46]: As we wrap, this is kind of the call to action.

William [01:04:49]: Uh, part one, you have Chai Grant, which I think a lot of people don't know about, which is grants for open source projects, any ideas, any projects that you want to see people work on, they should apply. Or let me think, I think, um, so we do Chai Grant and fundamentally, you know, we give cash, no strings attached. It's kind of our way of doing two things. One, giving back and supporting the community. We've benefited from a lot of open source packages. A lot of our developers and engineers are like really, really pro open source. And then also it's a great way to just meet talented people and, and like expand connections. So with respect to Chai Grant, if anyone's got any sort of, um, GitHub project, any sort of thing they built that they're proud of, just apply, just apply. It's like no strings attached cash and people have a pretty high success rate. So that's the first thing. Other calls to action would be, I think Chai is this, you know, it's a startup. We're a small team. It's like 15 people. We work very intense. It's a very hardcore sort of environment, which we found that a lot of people don't like. They don't like the, you know, they'll ask us about this concept of work-life balance. One time a person said, they said something like, I can't get this done because I'm taking PTO on Friday. And I said, what is PTO? Okay. Um, it stands for paid time off and this, I know what it is and this person was gone. They didn't like, they were no longer in the company four weeks on. Legally, I think you have to, oh, it's true. There's no problem. Look, if you've got to take a day off, right? We all have personal lives, right? But it's about this idea of responsibility. If you're not in the office on Friday, you still have your responsibilities. So I don't care if you work hard Thursday to get it wrapped up. I don't care if you're working hard Saturday to get it wrapped up. It's not an excuse to, it's not an excuse.
The way this individual spoke about it, it was like an excuse. I think it's an environment, very talented engineers working very hard in an intense space. It's the thing that gets me excited. It's, it's why I think, you know, I really love working at Chai is because it's a place of talent. It's a place of people working super hard. So yeah, I think people who have got, who've worked at startups and they, they love that. That's what they, they want the taste of, I think they should reach out, they should apply. And I think 90% of people can say that sounds terrible. Don't apply.

swyx [01:07:03]: It's not for them.

Alessio [01:07:03]: Yeah, it's exactly, exactly. Yeah. I just realized we skipped one important part. So you spent $10 million on compute last year. You say you're going to probably triple that. Yeah. I'm sure you're doing a lot of work on custom kernels, kind of like inference optimization, any cool stuff. Yeah. That you want to share there. Yeah.

William [01:07:20]: Lots of cool stuff. So really quickly, I think inference is very, very important. It's super important. It's massively underlooked and we can look at all the different foundation models and the techniques, the differences in the foundation models on how well they perform from a cost perspective with inference. Mixture of experts, for example, tend to do really, really good from like a cost perspective. We've worked with a very talented team called.

swyx [01:07:49]: MK1 and we, so I saw, I saw them in the Chaiverse logs. What are they?